Computer vision has long ceased to be something out of science fiction. Today, it helps cars recognize pedestrians, doctors analyze MRI images, and stores understand what products are on their shelves. And in all these scenarios, the heart of the technology is image annotation and data segmentation.

To put it simply, computer vision needs to “understand” what it is seeing. To do this, the image is broken down into parts — pixels are labeled, each corresponding to a specific object or area. But for artificial intelligence to not only see, but also understand where one object begins and another ends, a special technique is needed: instance segmentation.

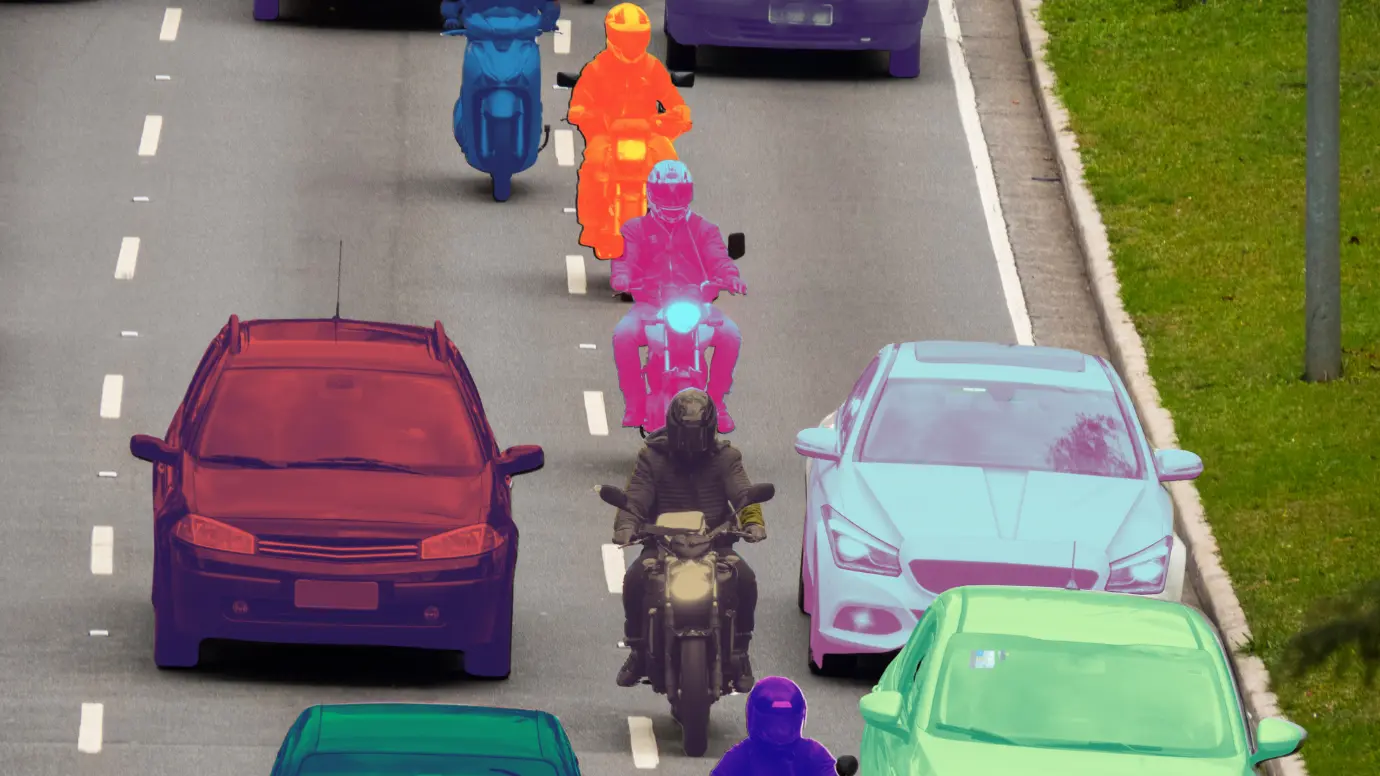

Instance segmentation is a method that allows not only detecting objects, but also highlighting each instance separately. For example, if there are five cars in a photo, the algorithm does not simply say “here are the cars,” but circles each of them, distinguishing between them. That is why instance segmentation in computer vision is considered one of the key areas of development for artificial intelligence today.

What Is Instance Segmentation?

When people talk about instance segmentation, they often imagine complex neural networks, graphs, and algorithms. But if you strip away all the technical layers, the essence of this technology is surprisingly simple: it teaches machines to see the world in real detail, distinguishing not just categories of objects, but each individual unit.

Clear Definition

The instance segmentation method is based on the idea that each object in an image must be recognized and outlined individually. This is not simply classification by category — the algorithm must understand each instance separately. For example, if there are ten dogs in a photo, the algorithm does not simply see “dog” as a general class. It sees ten specific dogs, distinguishes them from each other, and understands where one ends and another begins.

Each figure is assigned its own “mask” — a set of pixels that accurately describes the shape and position of the object.

Unlike simpler methods, such as semantic segmentation, no averaging or approximation is used here. The machine must literally “trace” the object as a human would do with a pencil.

This accuracy is especially important in areas where contours are critical: medical imaging, where it is necessary to accurately separate a tumor from healthy tissue, drone and video stream analysis, where objects move quickly and may overlap.

Experts emphasize that instance segmentation is not only about object selection, but also about understanding the scene at the level of detail.

For example:

- In medical images, the algorithm can select individual cells or anatomical structures, even if they are located very close to each other.

- In a traffic scene, each car, cyclist, and pedestrian is assigned a separate mask, which allows for accurate prediction of movement trajectories.

- In retail, the system sees individual products on the shelf, distinguishing identical packages from each other, which is impossible when using a bounding box.

Thus, Clear Definition instance segmentation can be summarized as follows: it is machine vision capable of seeing and distinguishing each object as a separate entity, preserving its shape, position, and boundaries in the finest detail.

This makes the method a universal tool for accurate, critical, and complex tasks in the real world, where every detail matters.

Difference from Semantic Segmentation

To understand how advanced instance segmentation is compared to other methods, it is worth comparing it to Semantic Segmentation, an earlier approach.

Semantic segmentation also labels every pixel in an image, but does so by category. If there are five cats in a picture, it will paint all of their areas the same color and label them “cat.” The problem is that the algorithm does not distinguish between one cat and another.

For it, it is one common mass of “cat pixels.” This approach is suitable for tasks where the individuality of objects is not important, for example, when calculating the total area of vegetation. But if you need to count the number of individual objects, determine their position or interaction between them, Semantic segmentation is blind. This is where instance segmentation comes into play: it sees what other methods miss — the differences between objects within the same category.

Difference from Object Detection

On the other hand, there is Object Detection, or simple “object recognition.” This method has long been the workhorse of computer vision. It determines what is depicted and where it is located, but it does so using rectangles — bounding boxes. This is convenient and fast, but crude. When there are several objects in the image that partially overlap each other, the frames begin to overlap, and the algorithm loses accuracy.

It knows that there is a car in front of it, but it does not understand where the hood ends and the background begins.

Instance segmentation, on the other hand, sees everything at the pixel level. It is not just a frame around the car — it is the contour of the body, the exact shape of the headlights, the boundaries of the windows. This level of accuracy makes it possible to analyze scenes not as a set of rectangles, but as a living, multi-layered space.

Illustrative Example

Imagine a frame from the camera of a driverless car. There are dozens of cars, bicycles, pedestrians, and road signs on the road. Object Detection will draw a rectangle around each car and say, “There’s a car here.” Semantic Segmentation will cover the entire area of cars with one color, “vehicle.” But only instance segmentation will show each car separately, outlining each one, even if they are side by side or partially obscuring each other.

It doesn’t just see “transport” — it understands that there are five different cars in front of it, with unique shapes and positions. That’s why instance segmentation has become the standard for “next-level vision” in autonomous systems, medical diagnostics, robotics, and even agricultural analysis.

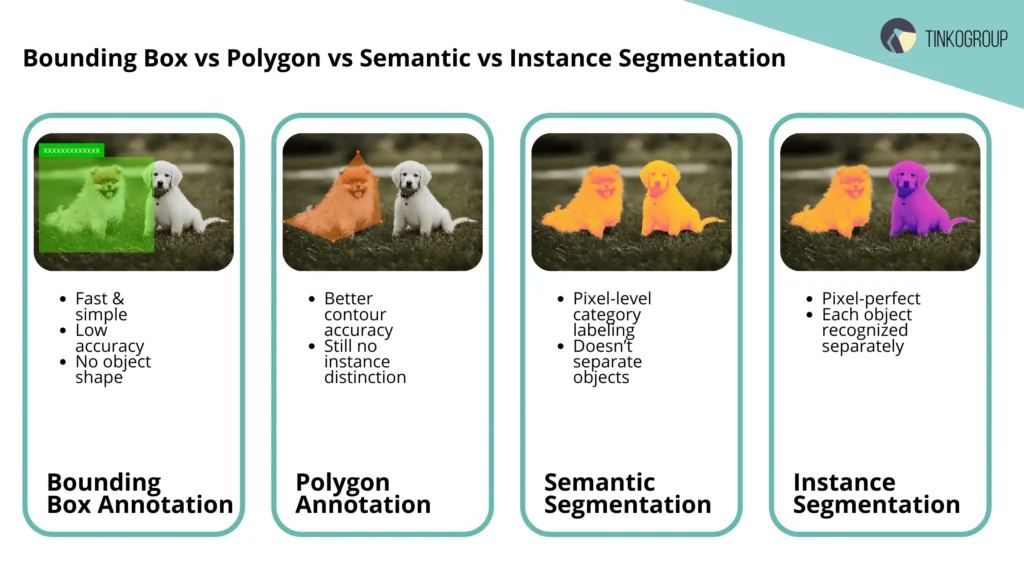

Instance Segmentation vs Other Annotation Techniques

When talking about image annotation, it is important to understand that instance segmentation is not the only way to teach a machine to see.

It is the culmination of a whole range of techniques, each of which solved the problem in its own way. To truly appreciate why this method has become key to computer vision, we need to look at its neighbors: Bounding Box, Polygon Annotation, and Semantic Segmentation.

Bounding Box Annotation

Bounding box annotation is the oldest and simplest way to designate an object. A human annotator draws a rectangle around the object, and the system learns to recognize it by its coordinates.The method is fast, inexpensive, and ideal for tasks where only the presence of an object is important, not its shape. But this simplicity comes at a price: the frame rarely coincides with the outline of the object.

It will always include part of the background, other objects, or empty space. For training machine learning models where accurate contours are critical (e.g., medical diagnostics or robotics), this level of roughness is unacceptable. Bounding boxes are useful for getting started, but not for depth.

Polygon Annotation

Polygon annotation emerged as an attempt to overcome the limitations of rectangles. The annotator manually connects points, creating a contour around an object of any shape. This allows you to accurately select even objects with curves, such as leaves, tools, or animals.

However, despite its high accuracy, this method still does not allow the machine to distinguish between individual instances of the same class. For the algorithm, it does not matter whether it is one object or several similar ones nearby.

In addition, manual polygon creation requires more time and skill, which makes the process expensive when scaling.

Semantic Segmentation

What about semantic segmentation vs instance segmentation? Semantic segmentation is a step forward in “understanding” the scene. Each pixel in the image segmentation in AI is assigned a category label: “person,” “road,” “house,” “tree.” These are no longer frames or contours — this is a “map of meanings” in which each part of the image is given its own meaning.

But this approach has a key limitation: semantic segmentation does not see the differences between objects of the same type. All cats, people, or cars are one continuous area for it.

This approach is ideal if you just need to divide scenes into classes (e.g., background and objects), but it is useless when you need to count individual instances or track their movement.

Why Instance Segmentation Is a Step Forward

Instance-level segmentation combines the best features of previous methods and brings the idea of annotation to perfection:

- like semantic segmentation, it works at the pixel level;

- like polygon annotation, it preserves the accuracy of contours;

- and, unlike all previous methods, it distinguishes each individual unit of a class.

As a result, the system doesn’t just see “five cars” or “a section of road” — it knows exactly where each one is, even if the objects overlap. This level of detail opens the door to complex tasks: autonomous driving, surgical AI assistants, industrial robots, and intelligent video analysis.

Advantages and Limitations

Like any complex technology, instance segmentation is not a silver bullet.It offers incredible accuracy, but requires enormous resources. It combines the strengths of deep analysis with the weaknesses of any system that depends on data quality and computing power.

To understand the real value of the approach, it is useful to consider both sides — what makes it revolutionary and what is currently holding back its widespread use.

Advantages:

- Pixel accuracy. The algorithm “sees” an object down to the last pixel, ensuring the highest accuracy.

- Distinguishing between identical objects. Even if there are dozens of similar objects in an image, each one will be identified as a separate unit.

- Rich training data. Instance segmentation training data contains much more useful information than frames or polygons.

- Flexibility of application. Suitable for medicine, agriculture, e-commerce, robotics, and many other fields.

Limitations:

- Resource intensity. Markup requires a large number of skilled specialists and time.

- High computing power requirements. The models are complex, and training takes weeks.

- Difficulties with quality control. Pixel-level errors are difficult to detect and correct manually.

Comparison table:

| Annotation technique | Level of accuracy | Distinguishes objects of the same class | Annotation speed | Application |

| Bounding Box | Low | No | High | Basic object detection |

| Polygon Annotation | Average | Partially | Average | Precise contour marking |

| Semantic Segmentation | High (by category) | No | Average | Classification by pixels |

| Instance Segmentation | Maximum (pixel) | Yes | Low (requires time) | Autonomous systems, medicine, robotics |

Key Applications & Industries

When it comes to instance segmentation, it’s easy to get lost in dry technical definitions and abstract descriptions of algorithms. In practice, its value is evident in its application: where it is critical to see objects not as abstract categories, but as specific, separate elements, every detail matters.

Experts working with annotated data note that instance segmentation is changing the approach to analysis and automation in a wide variety of industries, opening up opportunities that were only dreamt of a few years ago. Below are the key areas where the technology is already delivering tangible results.

Autonomous Vehicles

In driverless cars, every second on the road is critical. Systems must recognize cars, pedestrians, cyclists, traffic lights, and road signs, as well as predict their behavior.

Instance segmentation allows the machine to “see” all traffic participants, distinguishing them even in a dense crowd or when objects are partially obscured.

For example, at a busy intersection, the algorithm can accurately outline every bicycle, car, or motorcycle, which is critical for preventing accidents.

Experts note that even a small inaccuracy in determining the boundaries of an object can lead to an incorrect prediction of its trajectory and, consequently, to potential incidents.

In addition to road conditions, instance segmentation helps in parking systems, in nighttime shooting situations, and in difficult weather conditions when traditional bounding box algorithms lose accuracy.

Healthcare

In medical imaging, accuracy is a matter of life and death. With instance segmentation, doctors and researchers can identify tumors, organs, and individual cells in CT, MRI, and microscopic images. Conventional segmentation methods do not distinguish between individual instances: all objects of the same type are considered as a single entity.

Experts emphasize that accurate segmentation of individual tumors or lesions allows you to:

- monitor the dynamics of tumor growth or shrinkage over time;

- plan surgical interventions with high precision;

- improve algorithms for biomarker analysis and treatment prediction.

Even in biological research, the accuracy of pixel segmentation allows the identification of individual cells or tissue parts, which is impossible when using bounding boxes or semantic segmentation.

Retail & E-commerce

Visual analysis of products plays a key role in retail and e-commerce. Systems are capable of recognizing products on shelves, supporting visual search, analyzing product placement, and checking displays. A practical example: the algorithm can calculate how many units of each product remain on the shelf, identify missing items, detect incorrectly placed goods, and even recognize similar packaging with different barcodes.

Experts note that this level of detail improves inventory management accuracy, helps optimize store employee performance, and enhances the customer experience, which is not possible when using bounding boxes or semantic segmentation.

In addition, instance segmentation facilitates visual search in online stores: the system understands the exact contours of a product, not just its category, which increases the relevance of results for buyers.

Agriculture

Modern farmers are increasingly using instance segmentation to monitor fields and control plant health. Drones photograph fields from above, and algorithms identify each plant, estimate yield, and detect signs of disease or pests.

Even if the plants grow very close to each other, the system distinguishes each instance.

Experts note that this accuracy allows for precise planning of fertilizer application and pest control, as well as crop yield forecasting with much less error than traditional manual monitoring or the use of bounding boxes.

The use of instance segmentation in agricultural analytics expands the capabilities of remote observation and allows data to be integrated with other AI tools, for example, to automatically assess planting density or identify problem areas in the field.

Robotics & Manufacturing

In industry, robots must not only see objects, but also understand their shape, position, and orientation in space.

Instance segmentation allows robots to:

- accurately grasp objects;

- detect defects and defective parts;

- automate assembly and packaging processes.

For example, on an assembly line, a robot can see each screw and part separately, even if they are located close to each other, which minimizes the risk of errors and increases the speed of work.

Experts note that accurate object segmentation is especially useful for objects with similar shapes that traditional methods may not be able to distinguish, which is critical in the high-precision assembly of electronics or mechanical devices.

In addition, instance segmentation helps monitor production lines in real time, quickly identify anomalies, and automatically redirect defective products for reprocessing, reducing waste and increasing efficiency.

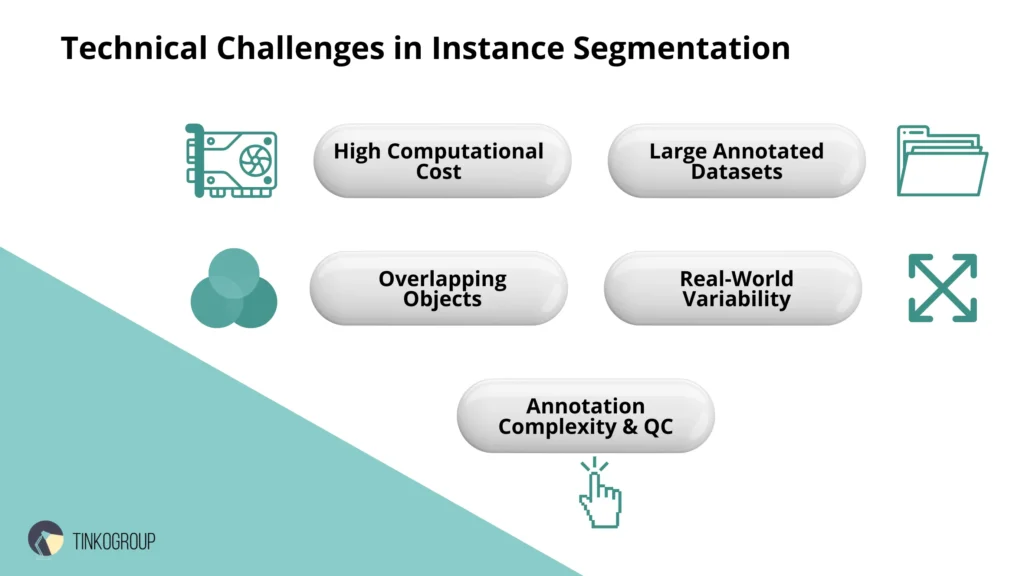

Technical Challenges

Although instance segmentation opens up incredible opportunities for image analysis and process automation, its implementation is accompanied by a number of serious technical challenges.

Experts who work with instance segmentation models on a daily basis know that accuracy and detail require not only resources, but also careful preparation, a strategic approach, and constant quality control.

In this section, we will examine the main challenges in depth and explain why they cannot be ignored.

High Computational Cost

Modern models such as Mask R-CNN, YOLACT, and SOLOv2 provide high pixel-level accuracy, but at the cost of enormous computational load.

Training on large datasets requires high-powered graphics processors and thousands of hours of computation. However, high costs can be mitigated by using Prepared AI Datasets for faster prototyping, allowing teams to test models without initial heavy investments in custom labeling.

Experts note several specific problems:

- Real-time inference. Many models are accurate but slow. For autonomous vehicles or robotics, the system needs to process frames in milliseconds, otherwise safety risks increase.

- Balance between accuracy and speed. Speeding up the process often leads to a loss of segmentation quality, especially in complex scenes with multiple overlapping objects.

- Energy consumption and cost. Large computing farms require significant resources, which increases the operating costs of companies using AI technologies.

Need for Massive Annotated Datasets

Model accuracy directly depends on the volume and quality of training data.

Instance segmentation in computer vision requires thousands of images, where each object is marked in detail at the pixel level.

Practical difficulties include:

- Complexity of manual annotation. If an object has a complex shape, such as tree branches or medical structures, annotators spend hours on each image.

- Multi-stage quality control. Errors in labeling can lead to incorrect model training and critical errors in operation.

- Shortage of rare classes. For example, for autonomous transport, it is difficult to collect a sufficient number of images of emergency situations or rare objects, which reduces the versatility of the model.

Experts often use mixed approaches: they combine real data with synthetic images and also use AI-assisted annotation, where algorithms suggest preliminary masks and humans correct them. This reduces labor costs and improves quality.

Difficulty Handling Overlapping Objects

One of the most difficult tasks is scenes where objects partially overlap each other.

For example:

- In a road scene, a pedestrian may be walking behind a tree, and a bicycle may be partially obscured by a car.

- In medical images, tumors and organs may overlap each other.

In such cases, the model must not only recognize the class, but also correctly identify each instance separately.

Experts share their practical observations: “In the first attempts, objects of the same class constantly ‘stuck together’. Only with additional data and refinement of masks was it possible to achieve separation.”

Methods such as Non-Maximum Suppression with masks are used, as well as training on specially prepared datasets with overlapping objects.

Variability in Lighting, Size, Perspective

The real world is far from laboratory conditions. Objects can be small or large, and shooting can take place in daylight, at night, in rain or snow.

These changes affect the accuracy of the models:

- Lighting: shadows, bright sun glare, or reflections can distort contours.

- Object scale: a small detail in the background may go unnoticed.

- Perspective and shooting angle: objects may be tilted, partially visible, or distorted by the lens.

Experts recommend data augmentation: rotation, scaling, brightness and contrast changes to train the model under a variety of conditions. This makes the system more resilient to real-life situations.

Annotation complexity and quality control

Annotation is not just “circling an object.” For instance segmentation, it is important that each pixel is correctly assigned to an object.

Problems include:

- Human factor. Annotators can get tired and miss small details, especially if the objects are small and numerous.

- Verification complexity. Even an experienced expert may not notice errors at object intersections or boundaries.

- Cost. High accuracy requires multiple levels of control and strict data annotation quality standards.

Practical experience shows that models start to work better when multi-level control is used — several annotators check each other’s work, plus automatic algorithms detect anomalies.

Reliable Data Services Delivered By Experts

We help you scale faster by doing the data work right - the first time

Advances & Future of Instance Segmentation

Over the past few years, instance segmentation has undergone a real revolution.

What seemed impossible ten years ago — training models that can accurately distinguish hundreds of objects in an image — has now become common practice for large AI teams.

Experts working with computer vision note that the modern era of instance segmentation is not only about improving models, but also about new approaches to training, data annotation, and processing complex scenes.

State-of-the-Art Models

Among modern solutions, Mask R-CNN, YOLACT, SOLOv2, and their derivatives occupy leading positions.

- What about object detection vs instance segmentation? Mask R-CNN has become a real breakthrough thanks to its ability to combine object detection and pixel segmentation. Experts note that the model copes particularly well with detailed contours and overlapping objects.

- YOLACT offered an innovative approach to fast real-time segmentation, which is important for robotics and autonomous transportation. It sacrifices a little accuracy for speed, but still remains an effective tool for practical tasks.

- SOLOv2 goes even further, replacing the classic pipeline for Mask R-CNN and creating a unified end-to-end approach that combines accuracy with performance.

These models are actively used in industry, medicine, and the automotive industry, and experts are constantly refining them, adapting them to real-world scenarios and increasing their resilience to non-standard conditions.

Weakly-Supervised and Unsupervised Segmentation

One of the major challenges is the lack of labeled data. Creating thousands of annotated images manually is expensive and time-consuming. This is where weakly-supervised and unsupervised methods come in handy:

- Weakly-supervised segmentation uses limited data, such as only bounding boxes or key points, to train the model to create object masks.

- Unsupervised segmentation allows the system to find object boundaries on its own without explicit labeling.

Experts note that these approaches do not yet completely replace manual labeling, but they significantly reduce labor costs and make it possible to train models on large datasets without direct annotation.

A practical example: startups in the field of autonomous driving use weakly-supervised approaches for rapid training on new types of road scenes, reducing data preparation time from weeks to days.

Synthetic Data and AI-Assisted Annotation

The creation of synthetic data has been a real lifesaver for experts working with instance segmentation training data.

- Synthetic images allow models to learn from scenes that are difficult to collect in real life: emergency situations, rare medical cases, complex industrial scenes.

- AI-assisted annotation is when algorithms pre-create object masks and humans only correct them.

Experts who have worked with large datasets note that this reduces labor costs by 3-5 times and improves quality because the preliminary mask already takes into account complex object boundaries.

This approach is especially in demand in medical imaging, where accuracy is critical and the number of frames is enormous.

Trends

Trends in instance segmentation can be summarized in three key areas:

- Speed and real-time. Models are becoming faster, allowing them to be implemented in autonomous vehicles, drones, robots, and security systems.Experts note that real-time segmentation is no longer a fantasy, but a working necessity, and many older architectures are being optimized for GPUs and TPUs to achieve millisecond response times.

- Scaling to large datasets. With the growth of available images and videos, models are trained on millions of objects, allowing them to cover rare scenarios and increase resistance to shooting conditions.

- Integration with other AI technologies. instance segmentation is increasingly being combined with tracking, 3D reconstruction, and action recognition to create comprehensive solutions for analyzing video and dynamic scenes.

Experts note that the future of instance segmentation is not just about more accurate masks, but the ability to understand the scene as a whole: how objects interact, how they change over time, and how they are affected by external conditions.

Conclusion

Instance segmentation has become one of the key technologies in modern computer vision. It allows systems to see the world at a detailed level, distinguish between objects of the same class, accurately trace contours, and work with complex scenes where traditional methods are ineffective.

Experts note that its value is evident not only in laboratory research but also in real-world applications, from autonomous vehicles and robotics to medicine, agricultural analytics, and retail. Pixel accuracy, the ability to distinguish between objects, and flexibility of application make instance segmentation an indispensable tool for companies that want to put artificial intelligence into practice.

However, implementing this technology requires experience, significant resources, and attention to data quality. This is where professional teams specializing in annotation, model training, and solution integration come in.

Tinkogroup offers comprehensive annotation services and solutions for training machine learning models, including the creation of instance segmentation training data, the use of synthetic data, and AI-assisted annotation. The company helps accelerate the implementation of complex models, reduce labor costs, and improve segmentation accuracy in any industry.

If you want to learn more about how instance segmentation can improve the efficiency of your project and optimize processes, check out our services here: Tinkogroup annotation services.

What is the main difference between Semantic and Instance Segmentation?

While Semantic Segmentation labels all pixels of a certain category (e.g., “car”) with the same color, instance segmentation identifies and outlines each individual object separately. It allows the AI to distinguish between “Car A” and “Car B,” even if they are identical.

Why is instance segmentation more expensive than using Bounding Boxes?

It requires pixel-perfect accuracy. Instead of drawing a simple rectangle, an annotator must create a precise mask for every object. This complexity increases the time spent on each image, though it significantly improves model accuracy for tasks like medical imaging or autonomous driving.

When should I choose instance segmentation over other methods?

Choose it when the exact shape, count, or interaction of individual objects is critical for your model. If you only need to know “if” an object is present, Bounding Boxes are enough. If you need to know exactly “where” each object ends and another begins (especially when they overlap), instance segmentation is the way to go.